AgentDress: Realtime Clothing Synthesis for Virtual Agents using Plausible Deformations

IEEE Transactions on Visualization and Computer Graphics (Special Issue of ISMAR 2021), 2021, 27(11): 4107-4118.

Nannan Wu, Qianwen Chao, Yanzhen Chen, Weiwei Xu, Chen Liu, Dinesh Manocha, Wenxin Sun, Yi Han, Xinran Yao, Xiaogang Jin

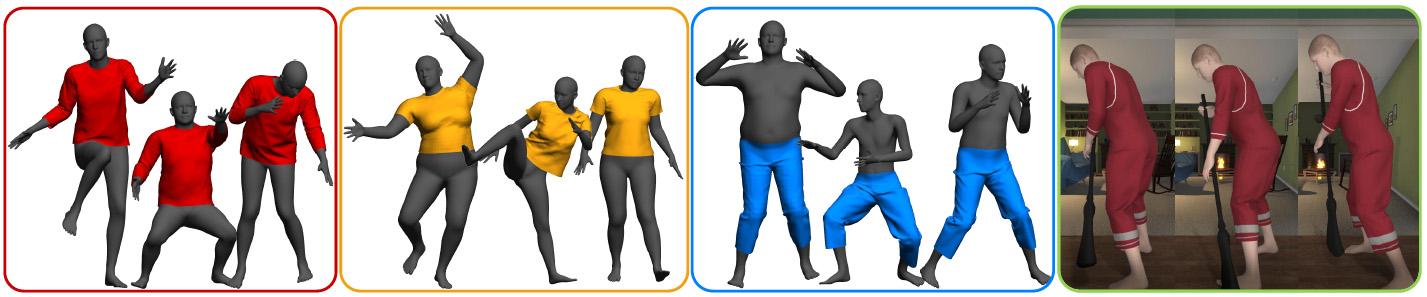

Our method generates real-time clothing animation with detailed wrinkles for various body poses, body shapes, and clothing types on a commodity CPU. It can also be applied in VR scenarios (right image).

Abstract

We present a CPU-based real-time cloth animation method for dressing virtual humans of various shapes and poses. Our approach formulates the clothing deformation as a high-dimensional function of body shape parameters and pose parameters. To accelerate the computation, our formulation factorizes the clothing deformation into two independent components: the deformation introduced by body pose variation (Clothing Pose Model) and the deformation from body shape variation (Clothing Shape Model). Furthermore, we sample and cluster the poses spanning the entire pose space and use those clusters to efficiently calculate the anchoring points. We also introduce a sensitivity-based distance measurement both to find nearby anchoring points and to evaluate their contributions to the final animation. Given a query shape and pose of the virtual agent, we synthesize the resulting clothing deformation by blending the Taylor expansion results of nearby anchoring points. Compared to previous methods, our approach is general and able to add the shape dimension to any clothing pose model. Moreover, we can animate clothing represented with tens of thousands of vertices at 50+ FPS on a CPU. We also conduct a user evaluation and show that our method can improve a user’s perception of dressed virtual agents in an immersive virtual environment (IVE) compared to a real-time linear blend skinning method.
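The abstract does not spell out the blending formula, so the following is only a minimal sketch of the general idea under stated assumptions. It assumes each anchoring point stores a cluster pose `theta`, a reference shape `beta0`, the clothing vertex positions computed there, and the Jacobian of those positions with respect to the shape parameters. A query is answered by a first-order Taylor expansion in shape at the k nearest anchors, blended with normalized inverse-distance weights. The per-parameter weighting inside `sensitivity_distance`, the dictionary layout, and all function names are hypothetical stand-ins, not the paper's actual definitions.

```python
import numpy as np


def sensitivity_distance(theta_query, theta_anchor, sensitivity):
    """Hypothetical sensitivity-weighted pose distance (an assumption, not the paper's formula).

    Each pose-parameter difference is scaled by a per-parameter sensitivity,
    so parameters that move the cloth more count more toward the distance.
    """
    diff = np.asarray(theta_query, dtype=float) - np.asarray(theta_anchor, dtype=float)
    return float(np.sqrt(np.sum(sensitivity * diff ** 2)))


def blend_taylor(beta, theta, anchors, sensitivity, k=2, eps=1e-8):
    """Blend first-order Taylor expansions (in shape) from the k nearest anchors.

    anchors: list of dicts with hypothetical keys
        "theta" (P,)       pose of the anchoring point
        "beta0" (S,)       shape at which the anchor was simulated
        "verts" (V, 3)     clothing vertex positions at (beta0, theta)
        "jac"   (V, 3, S)  d(verts)/d(beta) at the anchor
    """
    dists = np.array(
        [sensitivity_distance(theta, a["theta"], sensitivity) for a in anchors]
    )
    nearest = np.argsort(dists)[:k]           # indices of the k closest anchors
    weights = 1.0 / (dists[nearest] + eps)    # inverse-distance weights
    weights /= weights.sum()                  # normalize so weights sum to 1

    result = np.zeros_like(anchors[0]["verts"], dtype=float)
    for w, idx in zip(weights, nearest):
        a = anchors[idx]
        # First-order Taylor expansion around beta0:
        # verts(beta) ~= verts(beta0) + J (beta - beta0); (V,3,S) @ (S,) -> (V,3)
        approx = a["verts"] + a["jac"] @ (np.asarray(beta, dtype=float) - a["beta0"])
        result += w * approx
    return result


if __name__ == "__main__":
    # Two toy anchors with 2 vertices and a single shape parameter.
    anchors = [
        {"theta": np.array([0.0, 0.0]), "beta0": np.zeros(1),
         "verts": np.zeros((2, 3)), "jac": np.ones((2, 3, 1))},
        {"theta": np.array([1.0, 0.0]), "beta0": np.zeros(1),
         "verts": np.ones((2, 3)), "jac": np.ones((2, 3, 1))},
    ]
    out = blend_taylor(np.array([2.0]), np.array([0.5, 0.0]), anchors, np.ones(2))
    print(out)
```

In this sketch, a query pose halfway between the two toy anchors receives equal weights, so each vertex ends up at the average of the two shape-corrected predictions. The neighbor count `k` and the small `eps` (which keeps the weight finite when the query coincides with an anchor) are illustrative tuning knobs, not values taken from the paper.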