Automatic Embroidery Texture Synthesis for Garment Design and Online Display

The Visual Computer (Special Issue of CGI 2021), 2021, 37(9-11): 2553-2565.

Xinyang Guan, Likang Luo, Honglin Li, He Wang, Chen Liu, Su Wang and Xiaogang Jin

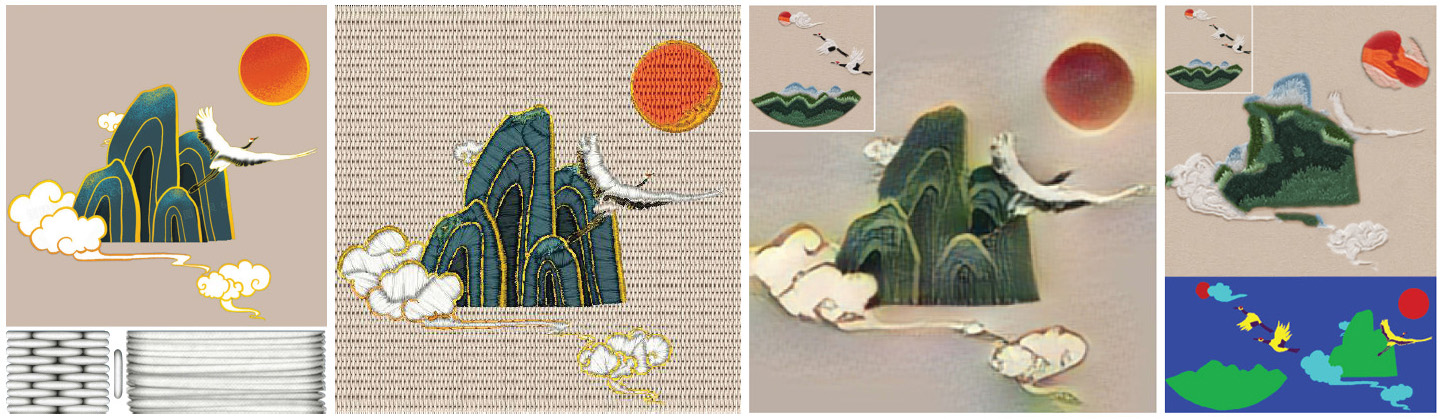

(a) (b) (c) (d)

Given an input image and some reference textures, our method converts the input into an embroidery artwork automatically. (a) The original image with the three reference textures used by our system (bottom, from left to right: long-short stitch, edge stitch, and satin stitch); (b) our result; (c) the result of neural style transfer, with its reference image in the top-left corner; (d) the result of patch-based synthesis, with its masks shown at the bottom and its reference image in the top-left corner.

Abstract

We introduce an automatic, texture-synthesis-based framework that converts an arbitrary input image into embroidery-style art for garment design and online display. Given an input image and some reference textures, we first extract key embroidery regions from the input image using image segmentation. Each segmented region is single-colored and is automatically labeled with a stitch style. We then fill these regions with embroidery reference textures via a stitch-style-based texture synthesis method. For each region, our approach maintains color similarity before and after synthesis, along with stitch style consistency. Compared to existing approaches, our method generates digital embroidery patterns with faithful details automatically. Moreover, it handles diverse input images effectively and enables a fast, interactive preview of the synthesized embroidery patterns on digital garments, thereby accelerating the workflow from design to production. We validate our method through extensive experimentation and comparison.
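To make the three stages named in the abstract concrete (segment into single-colored regions, label each region with a stitch style, fill each region from a reference texture while keeping its color), here is a minimal Python sketch. It is an illustration under stated assumptions, not the method of the paper: color quantization stands in for image segmentation, an area threshold stands in for the automatic stitch labeling, and tiling a grayscale texture stands in for texture synthesis. All names (`quantize`, `pick_stitch`, `fill_region`, `embroider`, `satin_max_area`) are hypothetical, and the edge stitch is omitted.

```python
# Illustrative sketch only; every function and parameter here is hypothetical.
# Images are nested lists of (r, g, b) tuples; textures are 2D lists of
# positive brightness factors.

def quantize(image, levels=2):
    """Stand-in for segmentation: snap each channel to the center of one of
    `levels` bins, so pixels with the same snapped color form one region."""
    bin_size = 256 // levels

    def snap(c):
        return min(c // bin_size, levels - 1) * bin_size + bin_size // 2

    return [[tuple(snap(c) for c in px) for px in row] for row in image]


def region_pixels(flat):
    """Group pixel coordinates by color (ignores spatial connectivity)."""
    regions = {}
    for y, row in enumerate(flat):
        for x, color in enumerate(row):
            regions.setdefault(color, []).append((y, x))
    return regions


def pick_stitch(area, satin_max_area=4):
    """Assumed heuristic: small regions get satin stitch, larger ones
    long-short stitch. The paper's labeling rule may differ."""
    return "satin" if area <= satin_max_area else "long_short"


def fill_region(out, pixels, color, texture):
    """Tile the texture over the region and modulate the region color,
    normalizing by the mean sampled brightness so the region's average
    color stays close to `color` (barring clamping at 255)."""
    th, tw = len(texture), len(texture[0])
    samples = [texture[y % th][x % tw] for y, x in pixels]
    mean = sum(samples) / len(samples)
    for (y, x), s in zip(pixels, samples):
        shade = s / mean
        out[y][x] = tuple(min(255, round(c * shade)) for c in color)


def embroider(image, textures, levels=2):
    """Run the three stages; returns the filled image and the stitch style
    chosen for each region color."""
    flat = quantize(image, levels)
    out = [list(row) for row in flat]
    styles = {}
    for color, pixels in region_pixels(flat).items():
        style = pick_stitch(len(pixels))
        styles[color] = style
        fill_region(out, pixels, color, textures[style])
    return out, styles
```

Dividing each texture sample by the region's mean brightness is one simple way to express "color similarity before and after synthesis": the stitch pattern changes individual pixels, but the average color of the region is preserved as long as no channel saturates.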