HairMapper: Removing Hair from Portraits Using GANs

2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR’2022), June, pp. 4227-4236.

Yiqian Wu, Yongliang Yang, and Xiaogang Jin

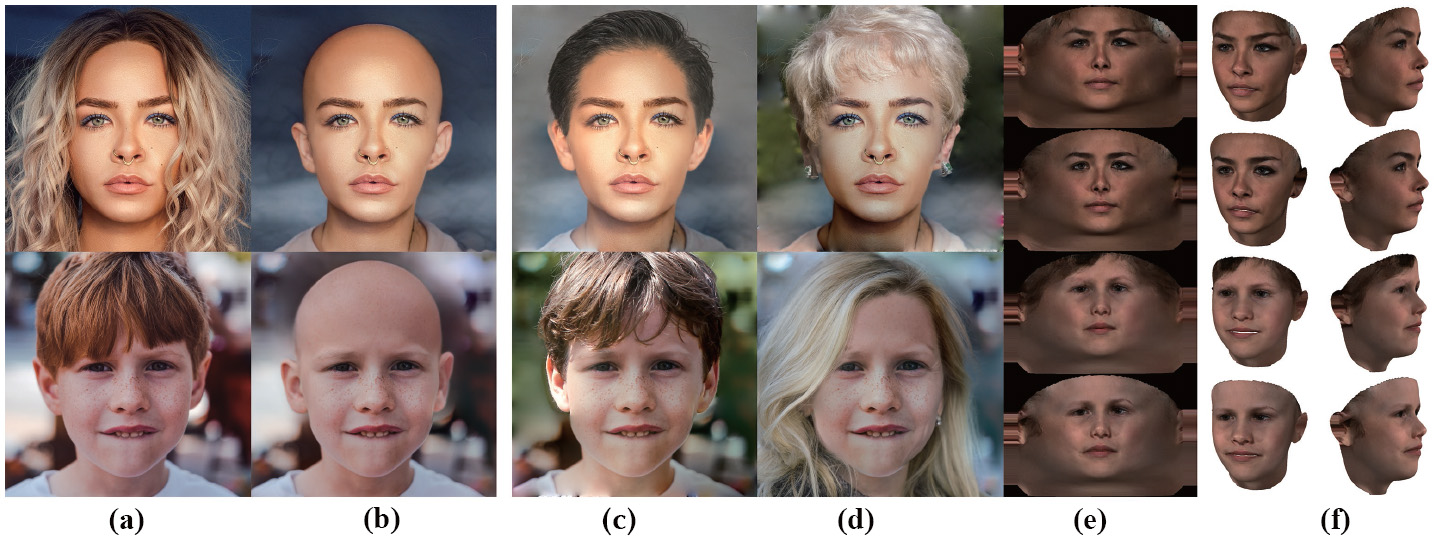

Given portrait images in which hair partially occludes the face (a), our method generates portraits without hair while preserving facial identity (b). With the hair removed, the resulting portraits can be used for hair design by simply blending the clean face with hairstyle templates (c and d), free of interference from the existing hair. Our results also benefit 3D face reconstruction, which can use the clean face textures (e, f) generated by our method (rows 2 and 4), in contrast to the results from the original images (rows 1 and 3).

Abstract

Removing hair from portrait images is challenging due to the complex occlusions between hair and face, as well as the lack of paired portrait data with/without hair. To this end, we present a dataset and a baseline method for removing hair from portrait images using generative adversarial networks (GANs). Our core idea is to train a fully connected network HairMapper to find the direction of hair removal in the latent space of StyleGAN for the training stage. We develop a new separation boundary and diffuse method to generate paired training data for males, and a novel “female-male-bald” pipeline for paired data of females. Experiments show that our method can naturally deal with portrait images with variations on gender, age, etc. We validate the superior performance of our method by comparing it to state-of-the-art methods through extensive experiments and user studies. We also demonstrate its applications in hair design and 3D face reconstruction.
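
To make the core idea concrete, the following is a minimal sketch of a fully connected network that maps a latent code to an offset along a hair-removal direction. The abstract states only that HairMapper is a fully connected network operating in the latent space of StyleGAN; everything else here is an assumption for illustration, including the class name `ToyHairMapper`, the two-layer layout, the leaky ReLU activation, the latent dimension, and the residual form `w + strength * delta`. The weights are random and untrained, and no StyleGAN generator is included, so the sketch shows only the data flow, not actual hair removal.

```python
import random

LATENT_DIM = 8  # illustrative size only; real StyleGAN latent codes are much larger


def make_layer(n_in, n_out, rng):
    """Return (weights, biases) for one fully connected layer."""
    scale = 1.0 / n_in ** 0.5
    weights = [[rng.uniform(-scale, scale) for _ in range(n_in)]
               for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def linear(layer, x):
    """Apply a fully connected layer: y = W x + b."""
    weights, biases = layer
    return [sum(w * v for w, v in zip(row, x)) + b
            for row, b in zip(weights, biases)]


def leaky_relu(x, slope=0.2):
    """Element-wise leaky ReLU (the activation choice is an assumption)."""
    return [v if v > 0 else slope * v for v in x]


class ToyHairMapper:
    """Hypothetical stand-in for a latent-space mapper; not the authors' model."""

    def __init__(self, dim=LATENT_DIM, hidden=16, seed=0):
        rng = random.Random(seed)
        self.fc1 = make_layer(dim, hidden, rng)
        self.fc2 = make_layer(hidden, dim, rng)

    def offset(self, latent):
        """Predict a per-latent offset (the assumed hair-removal direction)."""
        return linear(self.fc2, leaky_relu(linear(self.fc1, latent)))

    def remove_hair(self, latent, strength=1.0):
        """Return the edited latent w + strength * delta (assumed residual form)."""
        delta = self.offset(latent)
        return [w + strength * d for w, d in zip(latent, delta)]


mapper = ToyHairMapper()
w = [0.1] * LATENT_DIM           # placeholder latent code
w_bald = mapper.remove_hair(w)   # would be passed to a generator in a full pipeline
```

In a full pipeline, the edited latent `w_bald` would be decoded by a pretrained generator, and the mapper would be trained on paired latent codes such as those produced by the data-generation steps the abstract describes.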